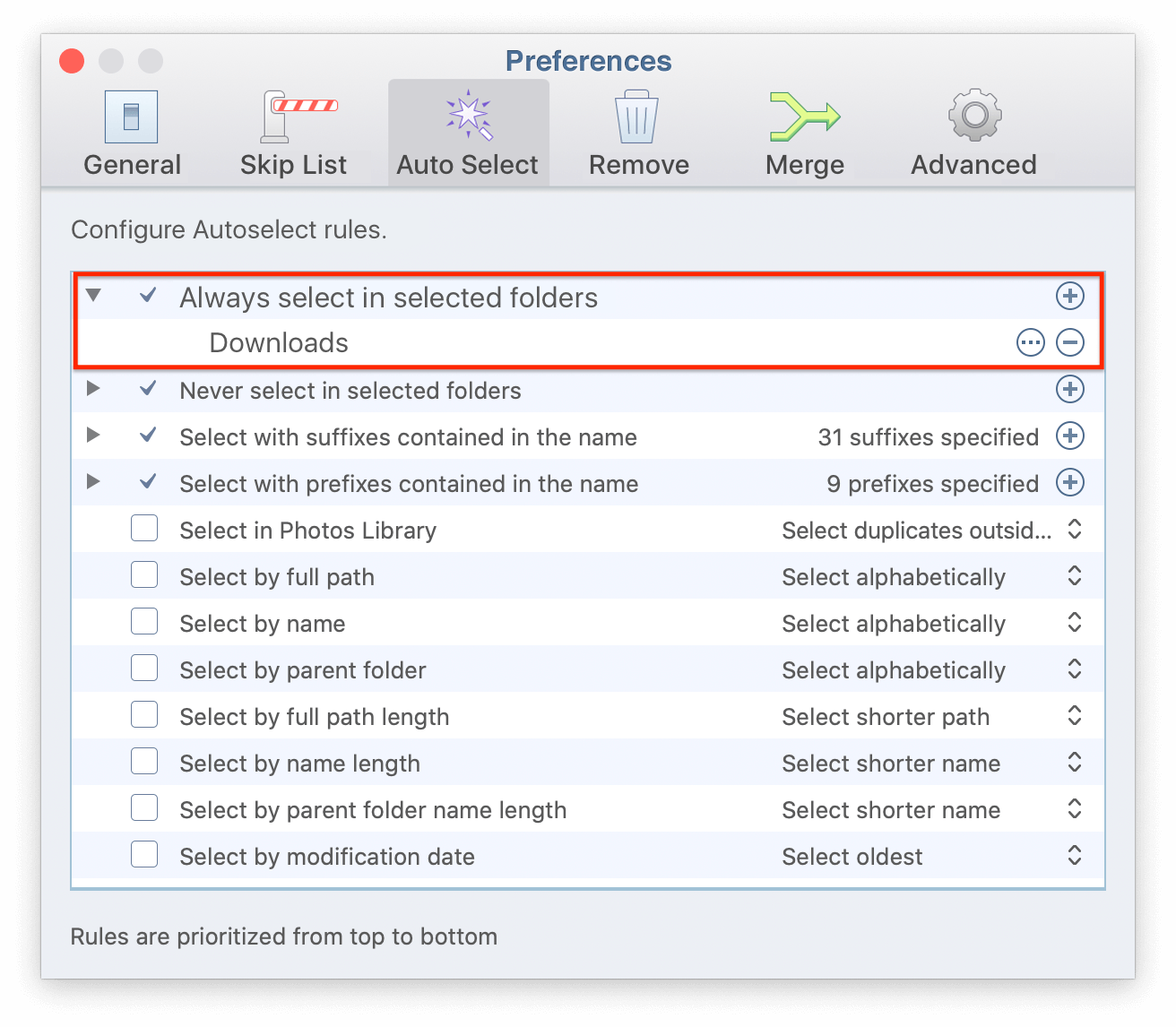

I need to prepare two large directory structures that have gotten out of sync, where one is more up to date than the other (this would be my reference). Most of the files should be duplicates between the two, but I suspect that some files still exist only on the other path, so I don't want to just remove it. What I need is to search for duplicates against a reference path: I define one path as the reference and search inside the other path for files that also exist in the reference path, in order to remove them. Are there perhaps some options to fdupes to achieve this that I have overlooked? I have tried writing a Python script to clean up the list that fdupes outputs, but without success.

Within the browse window, you need to enter the folder you want to compare, then click Open.
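The cleanup of the fdupes list described above could be sketched roughly as follows. This is only an illustration, not a tested answer to the question: it assumes the default fdupes output format (one path per line, duplicate sets separated by a blank line) and that no file name contains a newline. The function names `parse_fdupes_groups`, `is_under`, and `removable_files` are made up for this example.

```python
import os


def parse_fdupes_groups(text):
    """Split fdupes output into groups of paths (assumes blank-line separators)."""
    groups, current = [], []
    for line in text.splitlines():
        if line.strip():
            current.append(line)
        elif current:
            groups.append(current)
            current = []
    if current:
        groups.append(current)
    return groups


def is_under(path, root):
    """Return True if path lies inside the directory root."""
    path = os.path.abspath(path)
    root = os.path.abspath(root)
    return os.path.commonpath([path, root]) == root


def removable_files(fdupes_output, reference_root):
    """List duplicates outside reference_root that also have a copy inside it."""
    result = []
    for group in parse_fdupes_groups(fdupes_output):
        # Skip groups whose members all live outside the reference tree:
        # those are duplicates within the other path only.
        if any(is_under(p, reference_root) for p in group):
            result.extend(p for p in group if not is_under(p, reference_root))
    return result
```

The sketch only returns the list of candidates; reviewing that list before deleting anything seems prudent, since files that exist only on the non-reference path never appear in it and are therefore left alone.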

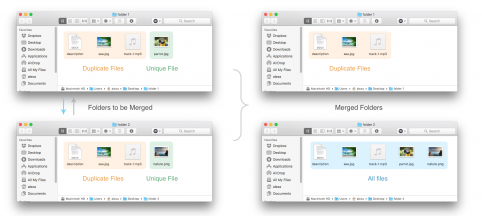

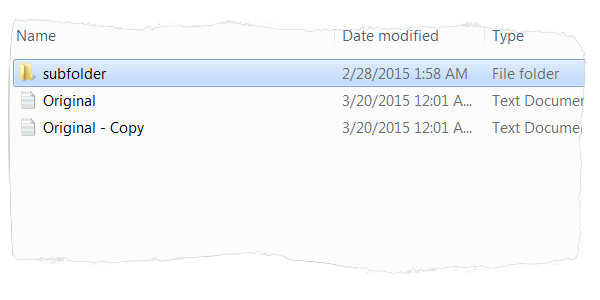

There are a couple of duplicate file finders listed for Linux. However, from what I have seen, these find all duplicates across the selected directory structures or search paths, and thus also duplicates that exist inside only one of the search paths (if you select multiple).

For one of the projects, we needed a PowerShell script that would find duplicate files in the network folders of the server.

1) In command line: forfiles /p C:\folder1 /s /c "cmd /c copy @path C:\temporaryfolder".
2) In Windows Explorer: Move files from the Temporary Folder to Folder 2 and replace the existing files. While the files are selected, delete them. The files that were moved were deleted because they were already in Folder 1.

In this tutorial, we've learned how to find duplicate files in Unix systems using the file name, checksum, fdupes, and jdupes.

Recover wasted disk space on your HDD, SSD, or in the Cloud Storage and speed up your computer by removing duplicate files.
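The checksum approach mentioned above can also be applied against a reference path directly, without a duplicate finder. The following is a minimal sketch under stated assumptions: SHA-256 is an arbitrary choice of checksum, and the helper names (`file_digest`, `walk_files`, `duplicates_of_reference`) are hypothetical. It hashes every file in both trees, which may be slow on large structures.

```python
import hashlib
import os


def file_digest(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def walk_files(root):
    """Yield the path of every file below root."""
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            yield os.path.join(dirpath, name)


def duplicates_of_reference(reference_root, target_root):
    """Return files under target_root whose content also exists under reference_root."""
    reference_digests = {file_digest(p) for p in walk_files(reference_root)}
    return sorted(
        p for p in walk_files(target_root)
        if file_digest(p) in reference_digests
    )
```

Files in the target tree with no matching content in the reference tree are never returned, so they stay in place. Duplicates that exist only inside the target tree are ignored as well, because matching is done solely against the reference digests.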
